Not one, but two 1-800 numbers called us yesterday. We didn’t answer either (obviously), but thankfully they left voice mails. It seems a multilingual robot lady was trying to get in touch. We don’t know what about, but it seemed kind of urgent. There were instructions to press buttons and speak to operators. She’ll probably call back. We don’t know if we’ll answer, but we’re still kind of hoping she’ll call. We’re curious what she wants and how those wants might involve us.

These calls got us wondering about whether robots were using other outmoded means of communication. Are there roomfuls of robots faxing people? Are people faxing the robots back? Is there a robot out there writing long-hand letters, folding the paper just so, sealing the envelope (with its machine tongue?), affixing a stamp (also with tongue?), and getting its bionic body over to the… place where you put the letters? We want to say “postal can”?

You might think a letterbot going to the postal can is all fine and good, harmless even, but what if the letterbots get so skilled that you can no longer clearly distinguish a letterbot letter from a humanperson letter? And what if they become so prevalent that no humanpersons even bother to write long-form letters anymore? What then?

And, more seriously, would you mind? Who doesn’t like mail?

Maybe the letterbots are very eloquent and sincere-seeming and go through the extra trouble of doing like little designs around the borders of the page. Maybe these letters address topics or ideas that you find yourself particularly invested in and are rarely if ever addressed elsewhere by humanpersons. Maybe these letters arrive on Sunday afternoons when you’re feeling a little out of sorts and they offer some strange kind of respite from the general malaise that comes from knowledge of another impending week. Maybe you reply, half-jokingly, to some of the letterbots’ letters and it learns a bit about you, refines how it writes and what it writes about. Maybe eventually, though you would never admit it to anyone, these letters are very close to exactly what you need right now and even though you know the letterbot is just a letterbot you feel something like gratitude for the letters it sends while remaining a little suspicious about why exactly it keeps offering up these letters for nothing.

A version of this last scenario - although, sadly, having nothing to do with letterbots - was discussed with some poignancy on Wednesday afternoon at McGill by Prof. Marisa Parham of the University of Maryland at College Park. She was invited by the Department of English to deliver the annual Spector Lecture (no idea, but it’s fun to say aloud) and gave a talk titled “Black Living + Other Computational Poetics.”

Over the course of sixty or so minutes, Prof. Parham addressed a wealth of issues centered largely on the ways that small and large language models turn raw data (e.g. the decontextualized banks of words or images) into information (e.g. a sensible or meaning-rich text or images) by way of an interpretive act guided by a user or algorithm. This process of turning unsorted data into information through an opaque hermeneutic/heuristic procedure, for Parham, seems to serve as not only a literal description of what certain AIs are up to, but a particularly helpful metaphor for understanding certain aspects of Black subject formation and expressivity (which she refers to under the umbrella concept of Black digitality).

Parham’s talk was rich with citation (from Timnit Gebru et al.’s cautionary paper on the “unfathomable” biases and prejudices in LLM data sets in “On the Dangers of Stochastic Parrots: Can Language Models Be Too Big?” to Lillian-Yvonne Bertram’s small-language-model poetics in Travesty Generator to Denise Ferreira da Silva’s discussion of the objectification of desire in her foreword to Harney and Moten’s All Incomplete to Morrison et al.’s collage-like assemblage of archival texts/images in The Black Book to Hurston’s “Characteristics of Negro Expression” to Baldwin’s “Sonny’s Blues”). Our notes, then, are at best partial - so please keep that in mind in what follows.

In some ways, Parham’s talk enacted what it discussed. Black digitality - as far as we understood - arises as an immanent expressive response to the interpellative force of over-abundant, affectively chaotic multimedia presented across contemporary life. Or, in other words, who you are and how you express yourself in the world is partly a result of all the dumb, funny, touching, fucked up, offensive, derogatory, insightful, maddening, and deranged shit you are faced with on the daily. This talk, then, offered up a carefully curated selection of materials that confronted us, positioned us, and called on us (and now you) to process them and somehow respond in our own particular ways.

We aren’t going to summarize the entire talk, but want instead to focus on a moment that, we think, casts a different and interesting light on all the (honestly exhausting) conversations about artificial intelligence that have been happening lately.

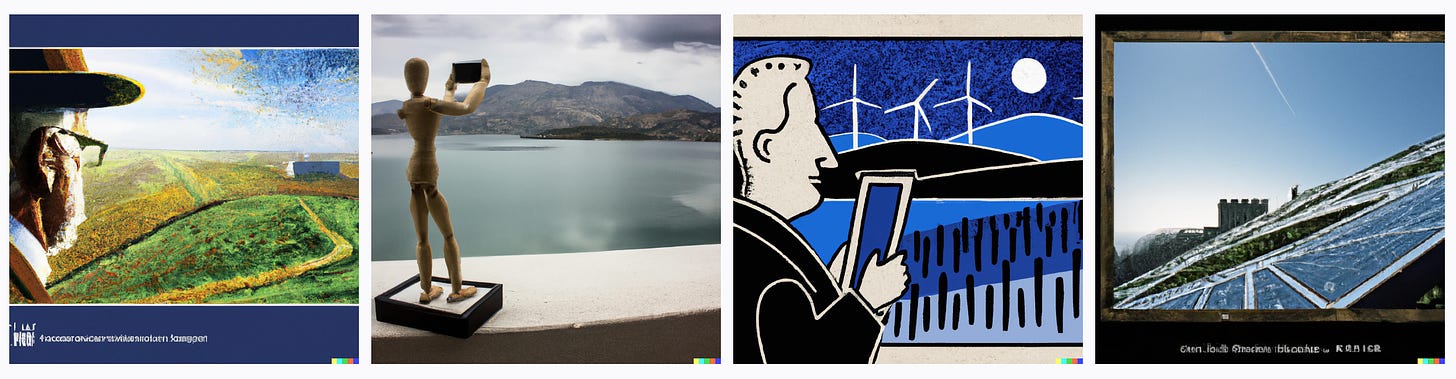

After working through the textual examples mentioned above, Parham noted that she had an abiding interest in the history of Black people swimming: the curious ways that - growing up in Chicago - she was able to swim in Lake Michigan during the summer while many Black communities elsewhere in the US, without access to bodies of swimmable water by dint of geography or public pools by dint of segregation laws, could not and did not learn to swim. She’d written a piece, “Like Sand Through the Hourglass,” for Public Books that touched on the topic and, after that, used this interest as a prompt for DALL-E (a tool from OpenAI, the company behind ChatGPT, that transforms a user’s textual description into visual images).

What she finds reflected in these images, she notes, are the “contours of her desire.” DALL-E has, in its own way, given her what she wanted. There is an emotional charge to the photos even if no emotional language was included in the prompt, no emotional stuff went into making the images, and no emotional agents are captured in the images. “I don’t look for the imperfections, the problems,” Parham remarked, “- but rather look to see what I wanted to find.” This is, then, an uncanny (or, in Parham’s particular sense, haunting) experience. The images feel familiar and strange, apt and odd, at once. The process by which these images are made, the various biases and prejudices implicit or explicit in the material referenced, and the subtle ways that the images are wrong or flawed are, momentarily, immaterial or obscure.

Faced with DALL-E’s output, you need to consciously or deliberately bring certain problems or complications back into view - but only if you want to. The viewer or user is, then, positioned at a certain interpretive juncture by the image. The image is responding to a request, but also - in its own way - requesting a response. This, then, kicks out a host of difficult questions.

Do we really often desire to undermine the satisfaction of our desires? Do we always or everywhere have the wherewithal to push back against what seems like just the thing we wanted or, at least, thought we wanted? Beyond being faced with just some image, how ought one respond to the possibilities promised by the tools that create these images? Or the inevitability of a world that comes to rely on or make use of such tools regularly? Will our desires shift because or for the sake of these subtle machines? That is, might we learn to edit or alter our desires to better facilitate the machine’s work? And, if so, are our desires now its? Vice versa?

The questions and concerns that Parham raised regarding these pseudo-photos did not strike us as alarmist or reactionary. Nor did it seem like Parham was keeping some unshared value judgment up her sleeve. Instead, the ethical and political significance of this kind of affective and interpretive irresolution was left to linger. This, to us, was significant in itself. There were neither utopian nor dystopian proclamations. Nor was the impact of these tools reduced to or framed as principally economic. Instead, we were left to interpret - in whatever ways we might - the more idiosyncratic, personal, and complex ways these tools orient or disorient us particularly and how those small-scale (dis)orientations might influence the formation or dissolution of larger social and political structures.

While Parham did not discuss this explicitly, implicit in this concluding part of her talk was the promise that tools like DALL-E will eventually be able to deliver ersatz evidence of one’s own life. A model could be trained on photos of you, your loved ones, the places you lived, objects you interacted with, etc. such that you might ask it to show you a version of your life that was never documented. It wouldn’t be a perfect representation of the past, but then again memories aren’t perfect representations of the past either. Or, divorced from lived experience, these tools might be able to offer up depictions of possible futures and pasts. What if we hadn’t passed that test? Suffered that injury? Moved to that place? Said those words? We might be able to see futures and pasts that can’t have been or won’t come to be. The represented futures and pasts we are given might not be real, but in what respect is the future or undocumented past real in the first place?

Would we, when prompted by seemingly impossible gifts (?), have the desire or drive to keep the technical and ideological behind-the-scenes work in sight? Would we, to return to our loyal letterbot, mark these letters return to sender? These are open questions that may not now or ever give way to final, resolute answers - but that might itself be a feature (not a bug) inherent to spending our days - past, present, and future - in a digital (or digitalized) world.

The multilingual robot lady from that 1-800 number hasn’t called yet. We still don’t know if we’ll answer, but we’re waiting. We’re still curious about what she wants, how those wants might involve us, and how we might respond.

[All the images across this essay were created by DALL-E. The prompts are provided in the captions in quotation marks. Note that while we used prompts similar to Prof. Parham’s in the instances of “Black children playing alongside a large lake” and “African American children playing alongside Lake Michigan,” these were not the same images shown in the talk.]

For fun (?), we asked DALL-E to produce a self-portrait. This is what they offered.

Another good one. 1-800 calls can be entertaining.

lol DALL-E your self-portrait